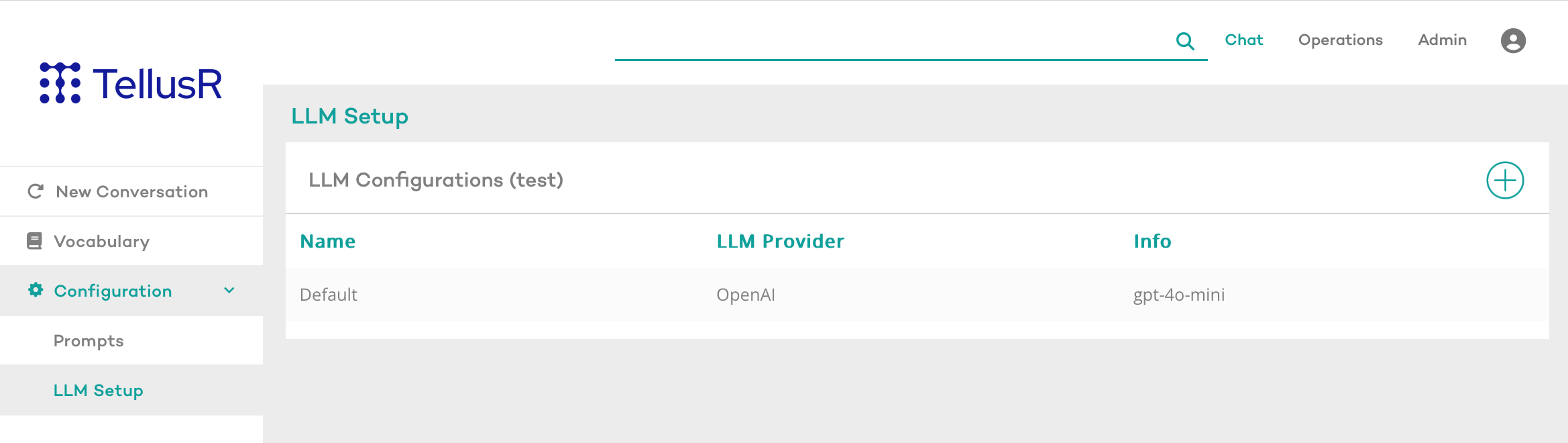

LLM Setup

This widget lets users configure which Large Language Model (LLM) is used by the assistants they create in TellusR. The system ships with a predefined default configuration, and users can create additional configurations as needed.

To configure the LLMs available to a specific assistant, select that assistant in the sidebar menu and navigate to Configuration > LLM Setup.

Default LLM Configuration

TellusR provides a default LLM configuration. If a default model and token are specified in the TellusR backend configuration, they are automatically applied as the default settings in this widget (see LLM Chat and RAG for details). Otherwise, users can configure these settings manually here.

Users can add further LLM configurations to accommodate different requirements and use cases.
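The fallback described above can be sketched in a few lines of Python. This is only an illustration of the rule "use the backend values if both are present, otherwise leave the default for manual entry". The `LLMConfig` class, its fields, and the `resolve_default` function are hypothetical names chosen for this sketch and do not reflect TellusR's actual schema or code.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LLMConfig:
    """Hypothetical stand-in for one LLM configuration (not TellusR's real schema)."""
    name: str
    model: Optional[str] = None
    token: Optional[str] = None

    def is_complete(self) -> bool:
        # A configuration is usable only when both a model and a token are set.
        return bool(self.model) and bool(self.token)


def resolve_default(backend_model: Optional[str],
                    backend_token: Optional[str]) -> LLMConfig:
    """Pre-fill the default configuration only if the backend supplies both values."""
    if backend_model and backend_token:
        return LLMConfig(name="Default", model=backend_model, token=backend_token)
    # Otherwise the default is left empty, to be filled in manually in the widget.
    return LLMConfig(name="Default")
```

Under these assumptions, `resolve_default("some-model", "secret")` yields a complete configuration, while a missing model or token yields an empty default that still needs manual setup.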

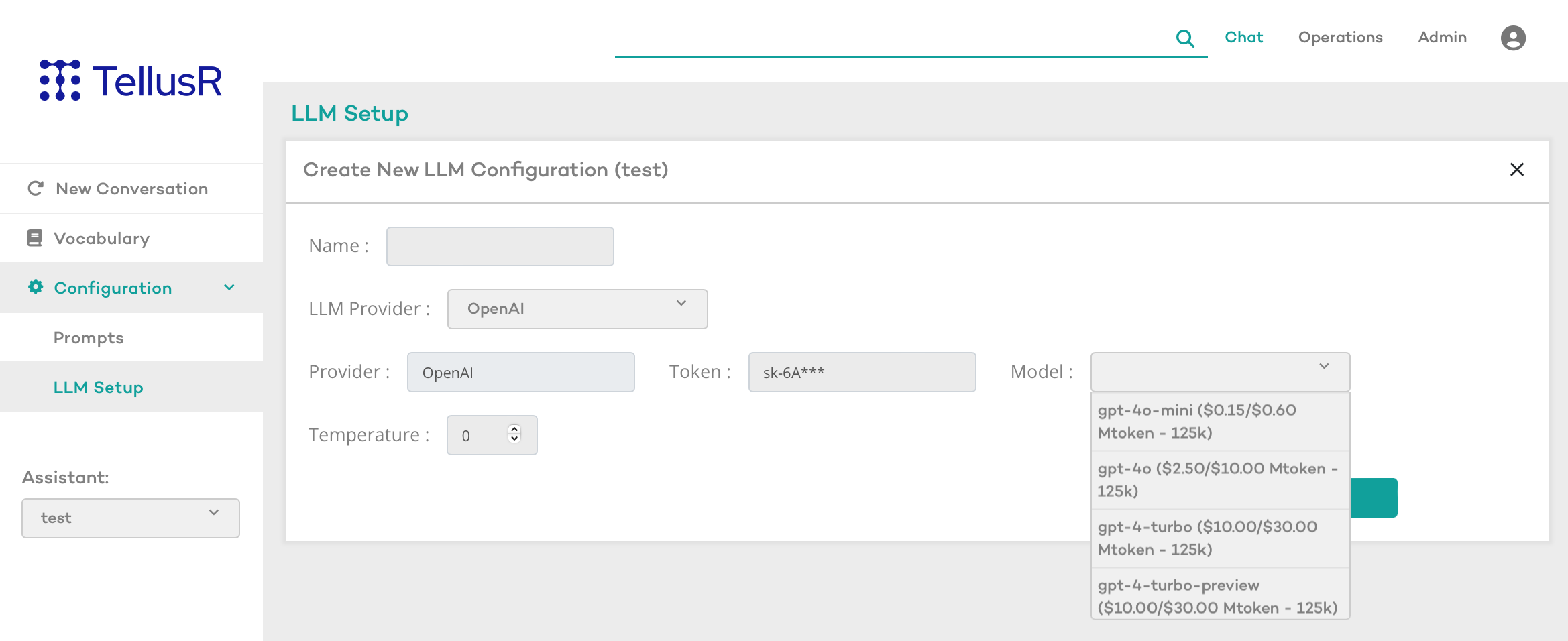

Creating a New LLM Configuration

- Go to Configuration > LLM Setup.
- Click the “+” icon in the top right corner to add a new configuration.
- Fill in the required details:
  - Name: Provide a descriptive name for the configuration.
  - LLM Provider: Select the desired provider (e.g., OpenAI).
  - Token: Enter the API key used to access the model.
  - Model: Choose one of the available models from TellusR’s suggestions (e.g., GPT-4-mini, GPT-4-turbo).
  - Temperature: Adjust the temperature setting to influence the creativity and variation in generated responses. Lower values give more focused, consistent answers; higher values give more varied ones.
- Click Save to store the configuration.
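To give a feel for what the Temperature setting in the steps above does, the following Python sketch applies temperature scaling to a small set of made-up token scores. It illustrates the general technique (dividing the scores by the temperature before a softmax) and is not TellusR or provider code. The scores and the function name are assumptions made for this example, and how a given provider treats particular values, including 0, is provider-specific.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Convert raw scores to probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        # This sketch only handles positive temperatures.
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up scores for three candidate tokens.
logits = [2.0, 1.0, 0.5]
low = softmax_with_temperature(logits, 0.2)   # low temperature: top token dominates
high = softmax_with_temperature(logits, 1.5)  # high temperature: flatter distribution
```

With a low temperature, nearly all probability goes to the highest-scoring token, so responses are more predictable. With a high temperature, the probabilities are closer together, so sampling produces more variation.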

Managing LLM Configurations

To modify an existing configuration, go to LLM Setup, select the desired configuration, and edit the settings.

To delete a configuration, click the “DELETE” button on the LLM Configuration page. Note that the default configuration cannot be deleted.

Accessing Additional LLM Models

If you wish to use an LLM that is not available in the interface, please contact TellusR support to explore additional options.

For questions or assistance, contact TellusR support.